Weekly Composition Post — "The Raindrop"

(Poster image crop from original by "Malta Girl" used under CC 2.0 license)

This week's (well, technically the week of April 19th) track is "The Raindrop".

It should have been posted on April 26th, but I didn't have time to start that track until Saturday, and when I did sit down to do it, I was just... I had a really bad mental health week that week and wasn't in a place to write music. Plus, as Maddy Myers reminded me when I talked about it with her later, "writing a song in a week is hard actually?"

It did get done, though, and I want to talk about how that happened. If you've listened to my other music, especially my earliest stuff from a couple years ago, and also followed me on socials, you may recall that 1.) I was using GarageBand on the iPad, and 2.) I talked a lot about my complicated feelings about using the "Drummer Tool" in GB. Well, because GB is a psyop intended to get you to buy Logic Pro (it worked), the "Drummer Tool" has a more complicated equivalent in Logic: the "Session Player" option.

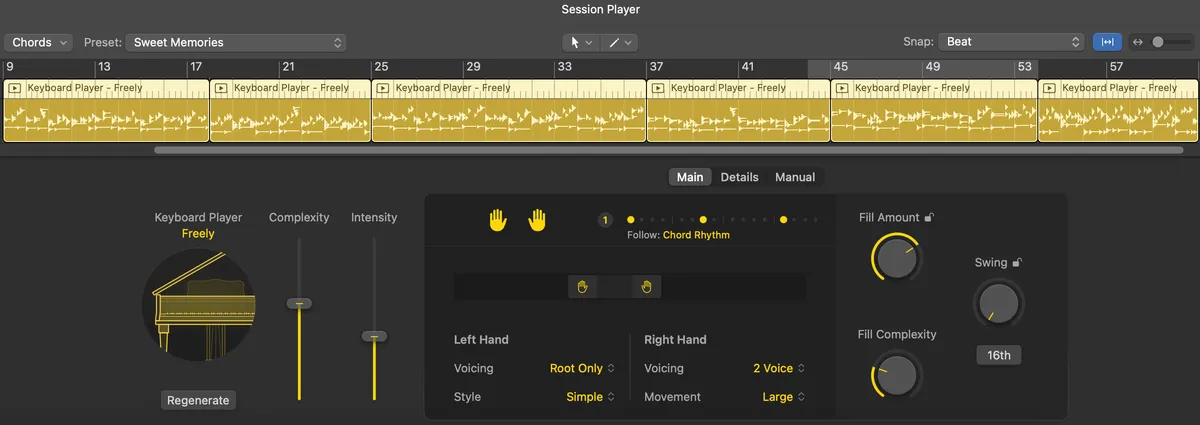

"Session Players" are basically automatic accompaniment. Logic offers a session player for keyboards, bass, and drums. At the most basic, a session player track follows the beat, key signature, and chords of your song. It can also 'follow' other tracks and respond to them; in my GB songs, for example, I'd often have the drummer follow a rhythmic line like the bass.

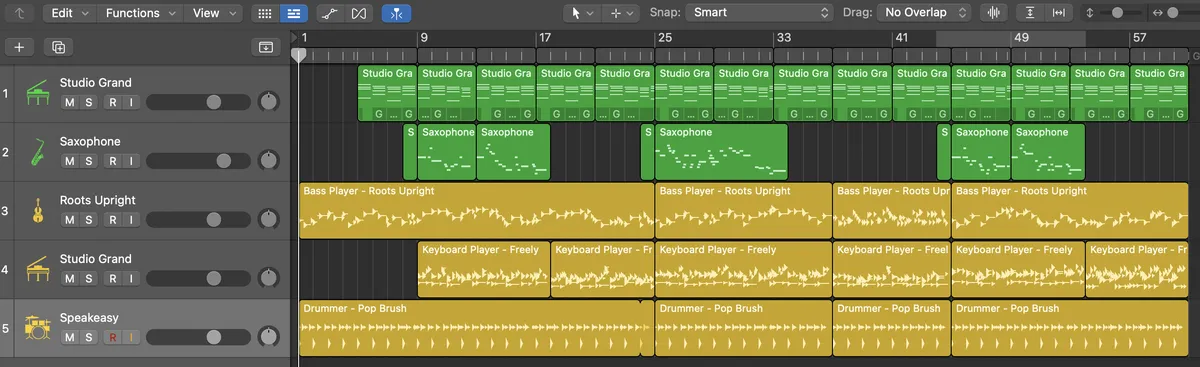

In "The Raindrop," the only tracks I fully composed by hand were the saxophone and the base piano chords. The drums, bass, and moving piano were all session players.

The green tracks here are software MIDI instruments I wrote by hand, note by note; the yellow ones are session players that are following along. A session player track's options are varied; this is an example of the piano session player's options. It's a lot, and there are two entire tabs of stuff in addition to this (including the hilariously named "Humanize" dial).

When I first started composing, I would liberally use the drummer tool in GB, or sample loops provided in the app (i.e. royalty-free stuff the app hands you), when making songs... and it really, really bothered me. In the classroom (my day job is college professor), I don't let students use "generative AI" or LLMs, etc. on their work, and my opinions on the matter are well-documented.

I kept wondering, though: am I not doing the same thing? Yeah, I have to tweak a bunch of knobs and dials to get session players to work, but how is that different from "prompt engineering"? On the other hand, if using sample loops is the same as using AI, then isn't the entire music industry kind of in trouble?

I poked at using session players in "The Raindrop" because I was feeling blocked, but worse, I was feeling sad and defeated, and it was getting in the way of composing even simple things, like some brush drums in the background that weren't that complicated. It did take work, too: splitting the track into sections so you can dial complexity up or down as needed, changing settings so the track is dynamic across the song, etc. all take time and effort. It felt impossible not to ask myself, however: shouldn't I just spend that effort doing it myself? Am I cheating?

This bothered me. Like, really bothered me, perhaps because so-called "AI" encroaches more and more on my professional life in a bad way every day, and I didn't want to be the hypocrite saying "do as I say, not as I do."

My friend Dr. Isaac Schankler, who I was chatting with about all this, gave me some useful perspective. I said that once I finally wrangled the session players, they sounded decent, and I was annoyed by that. It felt like cheating.

With their permission, I'm going to quote Dr. Schankler directly:

> I mean it is and it isn't. What I tell students is that your music should feel like your own and the more you outsource decisions like that, the more generic your music will be. But it's a spectrum and everyone has a different line that feels ok to them. Like, on a spectrum between playing and recording every single note yourself and AI-generated music, you're fine

I think the song I ended up making bears this out, too. It all sounds... fine? If I'm being real, part of me kind of doesn't like this song, because while it has a sort of polished sound, it's also... very dull, very expected. I probably could have produced the bass and drum lines by myself without too much effort, but the moving piano line... it sounds like somebody else is guesting on my song and it has nothing to do with me.

Isaac went on to say "maybe try to learn from what the session player is doing so you can see what it's doing, and bring some of yourself to it." I think that's a good outlook (and with the GB drummer tool, I was doing that, even if I wasn't terribly successful at it).

Even if I've been let off the hook of thinking this is "the same as using AI" by a professional (aheh), I still think this situation points at what the age of "generative AI" is making me feel: increasingly, I want the things I do to have my stamp on them. I want people to hear (or read, or whatever) the things I put in the world and know they were something I did. Otherwise, what's the point?